The Identity Trap: Why Smart People Reject AI Innovation

The people most likely to reject a new technology aren't the ones who don't understand it.

They're the ones who understand the old way better than anyone.

I've watched this play out dozens of times in engineering teams, in boardrooms, in entire industries. A new technology arrives. The people with the least invested in the status quo adopt it fast. The people with the most expertise in the current way of doing things become its fiercest critics.

We assume that's because experts see problems others miss. Sometimes that's true. But more often, what looks like rigorous analysis is something else entirely: the brain protecting an identity it spent decades building.

Steven Bartlett captures this in "The Diary of a CEO": the innovations that mattered most in history drew the harshest criticism, not on technical grounds, but because they undermined people's sense of competence and status.

The objection isn't technical. It's existential.

When "Quality Concerns" Are Really Identity Concerns

I see this most clearly in engineering.

A company introduces an AI coding assistant. Some developers adopt it immediately; they ship faster, iterate quicker, focus on architecture instead of boilerplate. Others dig in. They surface every edge case where the AI output is imperfect. They draft detailed Slack essays about maintainability risks. They request more evaluation time.

It looks responsible. It feels responsible. But I've learned to ask a different question: is this person evaluating a tool, or defending a worldview?

For many engineers, professional identity is inseparable from craft. You are the person who writes elegant code. Who debugs what others can't. Who earned respect through thousands of hours of deep technical work. When a machine replicates 70% of that output in seconds, the emotional response isn't excitement. It's threat.

I've felt it myself. Not specifically with coding tools, but in other moments when someone proposes something that makes my accumulated knowledge feel less central. There's a physical sensation, a tightening, before any rational assessment begins. That's not analysis. That's the immune system of the ego activating.

Engineers who thrive with AI haven't abandoned their standards. They've relocated their identity. Their value isn't in typing code; it's in knowing what to build and why. That shift sounds simple. It's one of the hardest psychological transitions a professional can make.

The Same Resistance, Different Departments

This pattern isn't unique to engineering. It shows up wherever expertise meets disruption.

In recruitment, talent leaders pushed back on AI screening for years. The stated reason: algorithms can't replicate human judgment. The unstated reason: if software can evaluate 500 CVs in the time a senior recruiter reviews 20, what does that say about 15 years of pattern-recognition skills? The recruiters who made the leap discovered something unexpected: they became more valuable. Freed from CV filtering, they now spend their time on interviews, candidate relationships, and cultural assessment. The work that actually requires human judgment.

In sales, automation met fierce resistance from leaders who built reputations on personal relationships. "You can't systematize trust," they argued. Meanwhile, their teams were burning 6+ hours weekly updating CRMs and writing follow-up emails – the administrative opposite of relationship-building. What they were protecting wasn't the human element of sales. It was the mystique of "knowing your territory" in ways no dashboard could capture.

In operations, the refrain is always the same: "Our processes are too unique. Too many edge cases. You'd have to understand our business." In practice, roughly 80% of most operational workflows follow repeatable patterns. The 20% that requires judgment is what gives the operations manager their irreplaceable status. Automating the predictable work doesn't eliminate that person; it concentrates their role on the decisions that actually matter. But accepting that means redefining what makes you valuable.

Across entire industries, the cycle repeats at macro scale. Banking executives declared neobanks would never secure licenses, then watched digital-first banks reach tens of millions of customers. Payments leaders called Banking-as-a-Service a "thin abstraction layer" until it became the default infrastructure for new financial products. Bankers dismissed Bitcoin as speculation and tulip mania. It became a trillion-dollar asset class, and the same institutions now compete to offer crypto custody. Stablecoins were labeled "crypto noise"; then Stripe made its biggest acquisition ever for stablecoin infrastructure, Visa began piloting stablecoin settlement, and the US established its first federal framework for payment stablecoins.

Each time, the timeline from "never going to happen" to "strategic priority" compressed. It's now roughly 18 months.

The Paradox of Deep Expertise

What makes the identity trap particularly insidious is that it targets the most capable people.

If you've spent two decades mastering a domain, a disruptive technology doesn't register as an opportunity. It registers as an attack on everything your career represents: your knowledge base, your professional network, your standing in the room.

One idea from Bartlett that I keep returning to: the fiercer the criticism a technology attracts, the stronger the signal that it might matter. An innovation that threatens someone's position is probably significant enough to change something.

Backlash intensity tends to track disruption magnitude.

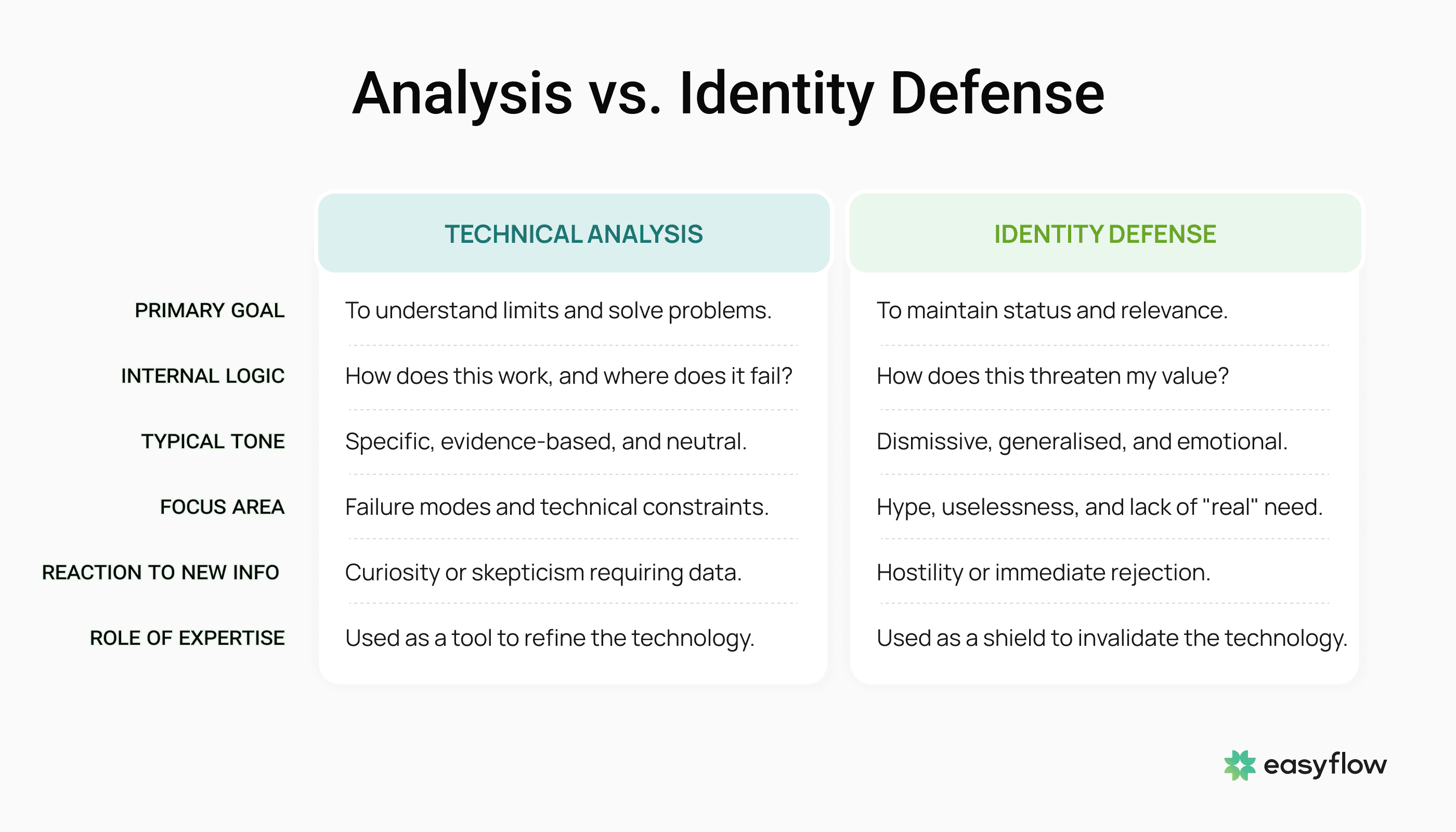

That said, not every controversial technology succeeds. Genuinely flawed ideas deserve scrutiny. The distinction that matters is between two types of criticism:

One sounds like, "Here are the specific technical constraints and failure modes I've identified."

The other sounds like, "This is overhyped. It doesn't work. Nobody actually needs this."

The first is analysis. The second is a psychological defense mechanism wearing the costume of expertise.

How Cognitive Dissonance Fuels the Trap

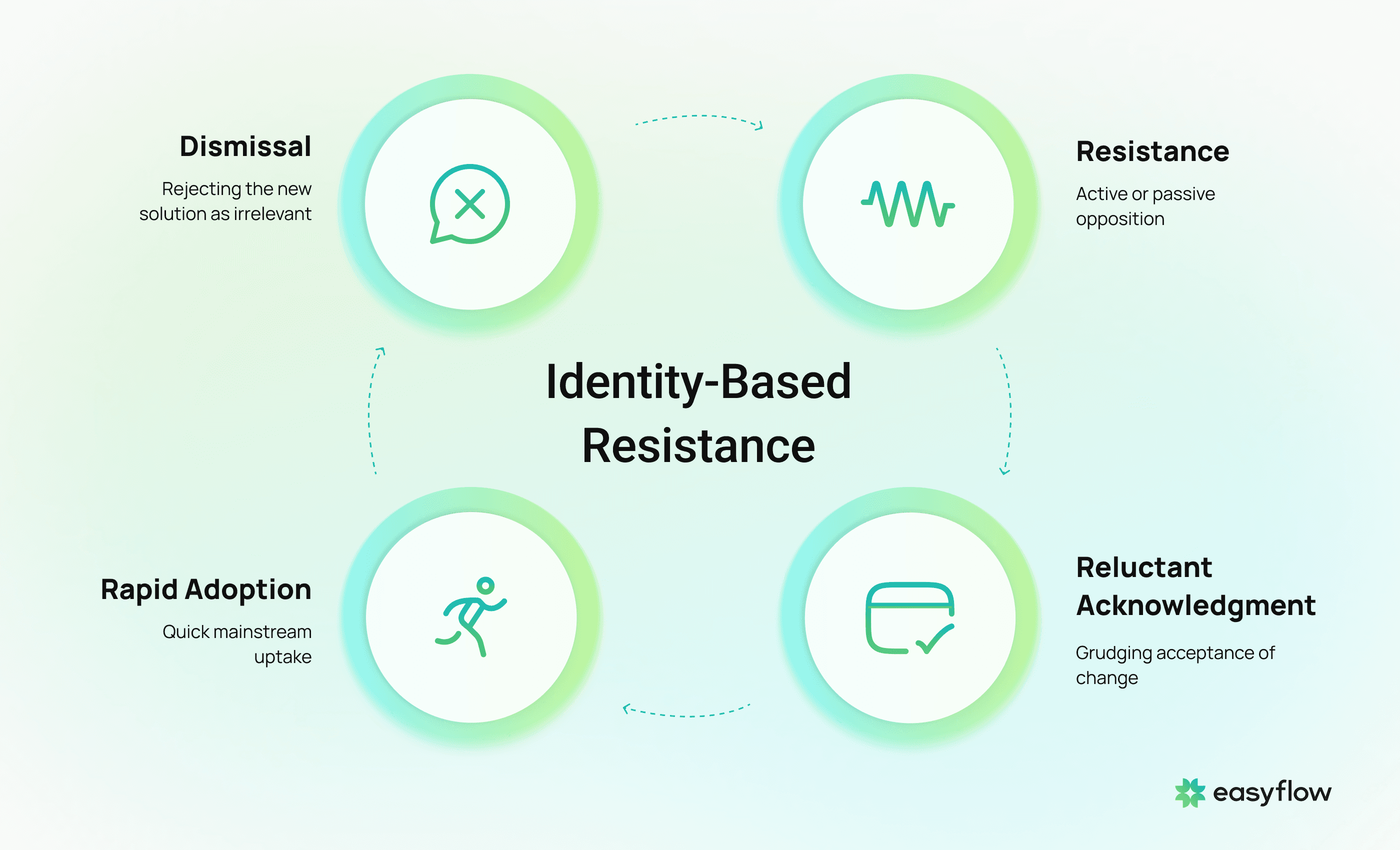

The underlying engine is cognitive dissonance – the mental discomfort when new evidence contradicts a core belief.

When a technology challenges something we've woven into our professional identity, the brain faces a choice.

Path A is to revise the belief. This is genuinely painful. It means conceding that skills you spent years developing may carry less weight going forward. It means a period of feeling incompetent. It means, in some sense, starting over.

Path B is to discredit the evidence. This is effortless by comparison. Every emerging technology has imperfections: find them. Every disruption has skeptics with credentials: amplify them. Assemble enough counter-evidence and the result looks like careful evaluation. It's not. It's a defense structure built from confirmation bias.

What we label as thorough due diligence is frequently sophisticated self-protection.

The acceleration of technological change makes this more costly every year. Cycles that once unfolded over a decade now complete in 18 months. The gap between "this will never work" and "this is table stakes" is narrowing fast.

Holding onto identity-based positions costs more with every cycle.

Four Practices That Help Me Stay Honest

I catch myself in the identity trap regularly. These aren't permanent fixes; they're pattern interrupts.

Checking the source of my resistance. Strong negative reactions to new ideas get a pause. I ask myself whether I'm assessing the concept or defending my relevance. When it's the latter, and it often is, I commit to more exploration before forming a position. Not because the idea deserves the benefit of the doubt. Because my initial reaction doesn't deserve my trust.

Paying attention to who objects loudest. When criticism of a technology concentrates among the people whose business models or expertise it most directly threatens, that's a signal worth investigating. Disproportionate anger from incumbents is data, not noise.

Running the 18-month scenario. I ask: if this technology delivers on its promise, what does my industry look like in a year and a half? When the honest answer is "unrecognizably different," that's my cue to go deeper regardless of my comfort level.

Keeping it first person. The easiest version of this conversation is pointing at other people's blind spots. The more useful version is admitting that everyone, including me, carries identity-driven biases. The only variable is whether we notice them before they become expensive.

What This Means for Leaders

If you're leading a growing company today, you're navigating several disruptions simultaneously. AI is changing how teams operate. Automation is restructuring workflows. Tools that defined your competitive edge three years ago are approaching obsolescence.

Every one of these shifts will activate identity-based resistance somewhere inside your organization. Among your engineers. Your sales team. Your operations staff. Your executive team. In your own decision-making.

The leaders who handle this well won't be the ones who chase every new technology. They'll be the ones who develop the judgment to distinguish genuine technical skepticism from identity defense masquerading as professional caution.

That ability to tell the difference – that's the leadership skill that matters most right now.

Everyone resists. The question is whether you recognize it before it costs you.

Want to move past resistance and actually automate your team's repetitive work?

Most teams don't resist automation because it won't work; they resist it because it challenges how they see their role. Easyflow builds custom AI agents that handle the predictable 80% of your workflows, so your people can focus on the judgment calls that genuinely need them. 2-4 week setup. No technical staff required.

Posted by Yura Gnatyuk, CEO